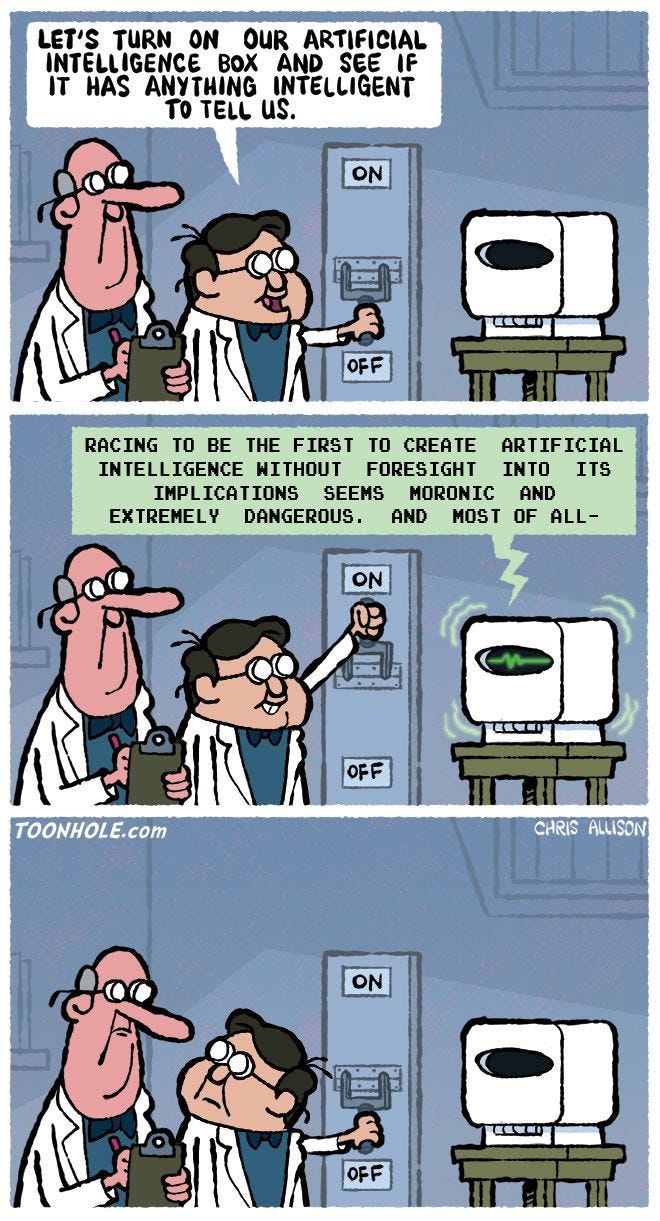

AI, AI Captain!

Artificial intelligence, machine learning and data science have been some of the most used terms of the decade. Almost all of us have come across WhatsApp forwards with quirky news like ‘AI can now procreate its own AI’. If you are using a smartphone, you are interacting with AI whether you know it or not. From obvious AI features such as the built-in smart assistants to not-so-obvious ones such as the portrait mode in the camera, AI is impacting our lives every day.

Here, we bring you young inventors, humanitarians and entrepreneurs who have led the quest to transform the world with AI.

Leila Pirhaji - ReviveMed

Leila Pirhaji built an AI-based tool for measuring tiny molecules in the body called metabolites, and her work could help us better detect and treat diseases. Measuring and identifying metabolites is expensive and time-consuming, and fewer than 5% of metabolites in a patient can be identified using common technologies. So Pirhaji developed a platform that uses machine learning to do it much more quickly. First, she built a huge database of all known information about existing metabolites and how they interact with various proteins and other molecules. Then her team collected tissue and blood samples from patients with known diseases and measured the metabolites. Her platform was able to analyze the data, understand the complex connections between diseases and metabolites, and use this information to discover new drugs. When she tested it in a mouse with Huntington’s disease during her PhD at MIT, her team learned new mechanisms for the disease and discovered new potential ways of treating it.

Andrej Karpathy - Tesla

Getting computers to ‘see’ has been an ambition of countless computer scientists for decades. Few have come closer than Andrej Karpathy, whose approach to deep neural networks allows machines to make sense of what is happening in images.

By combining convolutional neural networks (CNNs) with other deep-learning approaches, he created a system that was not just better at recognizing individual items in images (say, a dog or a person), but capable of seeing an entire scene full of objects, such as multiple dogs and people interacting with each other, and effectively building a story of what was happening in it and what might happen next. Typically, self-driving vehicles scan their surroundings with expensive laser range finders, build a virtual map, and then use AI to make decisions about what to do. Tesla’s approach uses traditional cameras. Not only can Karpathy’s method let the car spot objects in the road as a human driver would, but it can take in the entire scene (cars, people, intersections, stop signs, and more) and, if it works as intended, instantly infer what’s taking place.

Manuel Le Gallo - IBM Research

Training a typical natural-language processing model requires so much computing power that it emits as much carbon as five American cars do over their lifetimes. Training an image recognition model consumes as much energy as a typical home uses in two weeks, and it’s something that leading tech companies do multiple times a day. Much of the energy use in modern computing comes from the fact that data needs to be constantly transferred back and forth between the memory and the processor. Manuel Le Gallo is working with a research team at IBM that’s building technology to enable new kinds of computing architecture that aim to be faster and more energy-efficient while still maintaining precision. Le Gallo’s team developed a system that uses memory itself to process data, and its early work has shown that it can achieve both goals: satisfactory precision and huge energy savings. The team recently completed a process using just 1% of the energy needed when the same process was performed with conventional methods. “What will change is we will be able to train models faster and more energy efficiently, which will definitely reduce the carbon footprint and energy spent training those models,” Le Gallo says.

Katharina Volz - OccamzRazor

Volz noticed a problem with the study of Parkinson’s. Experts studying the disease were specializing in particular aspects of it and generally didn’t know much about and couldn’t engage with other aspects. These academic silos made it hard for new insights to be properly shared and explored. That’s where machine learning comes in. Volz realized AI could do a better job than a human at reading all the different papers and datasets published on a topic and identifying insights that could lead to breakthroughs. Thus, in 2016, OccamzRazor was born. The company is tackling the problem in two major steps. First, it has developed programs that read and understand published materials on Parkinson’s. Next, it is using AI to integrate genomics, proteomics, and clinical data sets. The goal is to predict new pathways and genes important to Parkinson’s that can then be tested in the laboratory. Volz and her team also have plans to scale up the platform to build comprehensive knowledge maps for other complex diseases related to the ageing of the brain.

Jiwei Li - Shannon.ai & Zhejiang University

Jiwei Li applies deep reinforcement learning (a relatively new technique in which deep neural networks are used to build reinforcement learning models) to natural language processing (NLP). By using deep reinforcement learning to identify syntactic structures within large pieces of text, Li made machines better at extracting semantic information from them. Li’s machine-learning algorithms find the grammatical structure of a sentence to get a much more reliable sense of the meaning. They have become a cornerstone of many NLP systems. Li’s company, Shannon.ai, is developing machine-learning algorithms that extract economic forecasts from texts including business reports and social media posts. Li has also applied deep reinforcement learning to the challenge of generating natural language. Li’s techniques give AI a good grasp of linguistic structure.

Bo Li - University of Illinois at Urbana-Champaign

“Adversarial attacks” (manipulations of input data that look innocuous to a person but fool neural networks) had been tried before, but earlier examples had been mostly digital. For instance, a few pixels might be altered in an image, a change invisible to the naked eye. Bo Li was one of the first to show that such attacks were possible in the physical world. Li also devised subtle changes in the features of physical objects, like shape and texture, that are again imperceptible to humans but can make the objects invisible to image recognition algorithms. Her goal is to use this knowledge about potential attacks to make AI more robust. She pits AI systems against each other, using one neural network to identify and exploit vulnerabilities in another. Li then develops strategies to patch these flaws and defend against future attacks. Adversarial attacks can fool other types of neural networks too, not just image recognition algorithms. For example, imperceptible tweaks to audio can make a voice assistant misinterpret what it hears. Some of Li’s techniques are already being used in commercial applications. IBM uses them to protect its Watson AI, and Amazon to protect Alexa. And a handful of autonomous-vehicle companies apply them to improve the robustness of their machine-learning models.
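For the curious, the idea of a digital adversarial attack can be sketched in a few lines of Python. This is only a toy illustration, not Li's method: it assumes a made-up linear "classifier" over 1,000 pixel values, and the names `score`, `predict` and `adversarial_example` are hypothetical. Each pixel is nudged by at most 0.02 (on a 0 to 1 brightness scale) in the direction that hurts the classifier most, in the spirit of gradient-sign attacks, and the many tiny nudges add up to flip the prediction.

```python
def sign(v):
    """Return -1, 0 or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)

def score(weights, bias, x):
    """Linear score: positive means class 1, otherwise class 0."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def predict(weights, bias, x):
    return 1 if score(weights, bias, x) > 0 else 0

def adversarial_example(weights, x, epsilon, true_label):
    """Shift every feature by at most epsilon against the true label.

    For a linear model the gradient of the score with respect to the
    input is just the weight vector, so stepping along -sign(w) lowers
    the score (and +sign(w) raises it) as fast as possible per feature.
    """
    direction = -1 if true_label == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]

# Toy model (an assumption for illustration): alternating +1 / -1 weights.
n_pixels = 1000
weights = [1.0 if i % 2 == 0 else -1.0 for i in range(n_pixels)]
bias = 0.0

# A mid-grey "image" that the model classifies as class 1.
image = [0.5 + 0.01 * sign(w) for w in weights]

# Perturb each pixel by at most 0.02, far too little to notice by eye.
epsilon = 0.02
attacked = adversarial_example(weights, image, epsilon, true_label=1)

largest_change = max(abs(a - b) for a, b in zip(attacked, image))
print("original prediction:", predict(weights, bias, image))
print("attacked prediction:", predict(weights, bias, attacked))
print("largest per-pixel change:", largest_change)
```

Real image classifiers are deep networks rather than a single linear layer, but the same principle applies: a change that is tiny per pixel can be large when summed over thousands of pixels.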

Miguel Modestino - NYU

Miguel Modestino has cleared a major hurdle in electrifying the chemical industry, which produces compounds used in everything from plastics to fertilizer. His AI-based system teaches itself how to optimize the reactions for making various chemicals by zapping them with pulses of electricity instead of the conventional approach of heating them. In an early lab project, Modestino’s team achieved more than a 30% boost in the production rate of adiponitrile (which is used in making nylon, among numerous other industrial processes), a greater improvement than any other method has shown in the last 50 years. The key was using complex pulses of electrical current at constantly varying rates to optimize yields. Figuring out what patterns of pulses to use required machine learning. Modestino and two former students recently founded Sunthetics to apply the AI system to other chemical processes, like those involved in generating hydrogen fuel and making polymers.

An Article By: Coding and Logic Team

DISCLAIMER

Shaastra TechShots’ publications contain information, opinions and data that Shaastra TechShots considers to be accurate as of the date of their creation, based on verified sources available at that time. They do not constitute either a personalized opinion or a general opinion of Shaastra or IIT Madras. The information provided comes from the best sources; however, Shaastra TechShots cannot be held responsible for any errors or omissions that may emerge.